Grade inflation

Meet Lytro.

According to the company that makes it, Lytro (which retails for $400-500) is a new kind of digital camera based on the emerging science of “light field photography.” In plain English, that means that unlike conventional cameras, which record only the total amount of light reaching each point in an image, Lytro records the color, intensity and vector direction of every light ray. This information is all stored within the image, which is then processed by a computer (either in the camera, or on a viewing device like a PC) to create an image in which you can change the focus after you take the picture.

So that’s what Lytro is. But according to today’s review at The Verge, one thing that Lytro isn’t is ready for prime time:

[O]ne of my biggest wishes for the Lytro is that it were built a bit more like a traditional camera. I never really got used to holding the Lytro, and I’d happily accept a larger body if it meant a slightly more ergonomically friendly design… The Lytro’s also hard to hold steady, since you don’t really get a grip or a way to balance your hands against each other, and pressing the power button always makes you push the camera down a bit…The Lytro’s display is by far the worst thing about this camera. First of all, it’s tiny: 1.46 inches diagonal. Second, it’s kind of terrible…

I had to charge the Lytro about every 400 shots, which isn’t great…

Here’s the thing about the Lytro: for a particular type of shot, it does a better job of capturing a photo than anything I’ve ever used… Unfortunately, that’s the only situation in which the Lytro really shines (which explains why the company’s example shots are all alike)…

In anything other than perfect lighting, photos were consistently grainy and noisy, not to mention just dark since you can’t really control the shutter speed or aperture — there’s not even a flash on the camera to poorly solve that problem…

Getting photos from your camera to your computer takes a long time. Each photo weighs in at about 16MB, and there’s also a fair amount of processing to be done on the computer before a shot is ready to be used; I imported 72 photos, and it took more than 20 minutes before the photos were available…

[T]he first iteration of the Lytro isn’t quite there yet: it’s hard to use, its display is terrible, and outside of a few particular situations its photos aren’t good enough to even be worth saving. It’s not even close to being able to replace an everyday camera, and at $399-$499, for most people it would have to.

Basically the only good things the reviewer, David Pierce, could find to say about the thing were:

- The dynamic focus gimmick is kind of cool;

- They promise everything else will get better eventually.

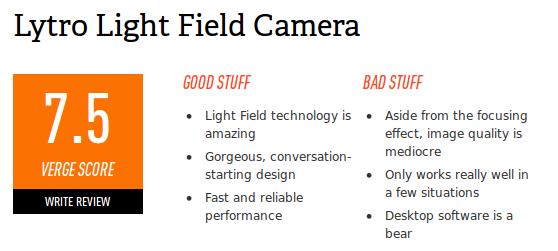

So, not a stellar review. But then I get to the end, where The Verge puts its numerical review scores (which are measured on a scale from 1 to 10, with 1 being “utter garbage” and 10 being “perfect”), and I see this:

7.5? Out of ten? After that review?

For reference, here’s how The Verge’s own guide to their rating system describes scores in the 7 range: “Very good. A solid product with some flaws.”

I’m not sure how anyone could read the same review I did and come away with the impression that Lytro is “a solid product.” The review I read made it sound like Lytro is a novelty device, and is going to stay that way until its makers figure out how to turn the tech behind it into something more broadly useful.

Hey, maybe they will some day! Who knows? But until they do, The Verge should be embarrassed by this review. Either the review text is correct, or the score is correct, but they can’t both be correct.

I say this not to pick on The Verge (well, not entirely, anyway). I like The Verge a lot, and generally find their articles and reviews well-written and thoughtful. I bring it up to make a broader point: how rarely you will see a review of a tech product come flat out and say “don’t buy this.”

The Lytro review (the text, anyway) comes close. You can tell that Pierce doesn’t think the device is ready for the mass market, or really for anybody beyond photo nerds who want to play with its one interesting trick. But if you translate that sentiment into a numerical score using The Verge’s scoring guidelines, you get a score somewhere between 3 (“Not a complete disaster, but not something we’d recommend.”) and 5 (“Just okay.”). Which sounds bad, on a scale of 1 to 10. It’s harsh! So they look harder at the device and, mirabile dictu, they find enough redeeming qualities to bump it up to a much more pleasant-sounding score of 7.5.

A 7.5 doesn’t say “don’t buy this.” It says “You may or may not want to buy this, we dunno.” It’s a pulled punch: a score low enough that they can say they didn’t recommend it without reservations, but not so low that the folks at Lytro will be tempted to come burn their offices down.

A 7.5, in other words, is a score that Lytro can live with. Which is great for Lytro. For potential Lytro customers, though, not so much.

You see this a lot in tech reviewing; too much, really. Even the worst stinkburgers get the “cautious optimism” treatment. Tech reviewers live in Lake Wobegon, where all the products are above average.